By Lili Kazemi | Founder, The Human Edge of AI

Artificial intelligence is no longer defined by a small number of centralized models competing for dominance.

The ecosystem has fragmented and democratized rapidly. Open models, specialized systems, enterprise deployments, ecosystem-native assistants, and memory-first architectures are all evolving simultaneously. As AI becomes more distributed, the real strategic question is no longer simply intelligence.

It is sovereignty.

Who controls the data, workflows, reasoning structures, and memory layers that increasingly shape how organizations operate and how individuals interact with technology?

This article argues that the next major AI battleground is not just the model itself, but the persistent memory layer behind it — the accumulated context, workflows, and behavioral continuity that make AI systems operationally valuable over time.

The End of the Blank Slate

In 2026, artificial intelligence is no longer competing solely on parameter counts or benchmark scores.

It is competing on who knows you best.

The industry has shifted from stateless assistants to systems that accumulate context over time through persistent interaction, workflow history, and behavioral patterns.

What emerges from that accumulation is what I call the Digital Soul.

Not the model.

Not the interface.

Not the chatbot.

The memory layer.

The persistent record of preferences, workflows, institutional knowledge, and decision patterns that makes an AI environment uniquely valuable to a person or organization.

That is the real battleground now.

And increasingly, it is becoming an operational and governance issue—not just a product feature.

Sovereign Memory

This is where a new governance principle emerges.

I call it Sovereign Memory.

Sovereign Memory is the principle that organizations must retain operational, contractual, and technical control over the memory accumulated by AI systems they depend upon.

The core idea is simple:

Your AI model may be replaceable.

Your memory layer may not be.

As AI systems become more persistent, more personalized, and more deeply integrated into workflows, memory itself is becoming strategic infrastructure.

And once that happens, ownership and portability matter just as much as intelligence.

Context Is Not Memory

One of the biggest conceptual mistakes in AI discussions is treating context and memory as interchangeable.

They are not the same thing.

The context window is short-term working memory. It allows an AI system to process information during an active session.

Persistent memory is long-term knowledge.

It stores:

- preferences

- workflows

- historical interactions

- organizational patterns

- behavioral tendencies

Context powers execution.

Memory compounds value.

For years, most AI systems were essentially stateless. Every interaction started from zero.

That era is ending.

AI is becoming stateful infrastructure.

And once systems retain institutional knowledge over time, the governance implications change dramatically.
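To make the distinction concrete, here is a minimal sketch, not a description of how any vendor implements memory. The names `Session`, `MemoryStore`, and `build_prompt` are hypothetical, invented for illustration. It assumes the context is a bounded list of turns that resets with each session, while memory is a separate store that is carried into every new session.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Session:
    """Short-term context: exists only for one active session (hypothetical)."""
    max_turns: int = 4
    turns: list[str] = field(default_factory=list)

    def add(self, text: str) -> None:
        self.turns.append(text)
        # Older turns fall out of the window once it is full.
        self.turns = self.turns[-self.max_turns:]


@dataclass
class MemoryStore:
    """Long-term memory: survives across sessions (hypothetical)."""
    preferences: dict[str, str] = field(default_factory=dict)

    def remember(self, key: str, value: str) -> None:
        self.preferences[key] = value


def build_prompt(memory: MemoryStore, session: Session, question: str) -> str:
    # Persistent memory is injected alongside whatever the session holds.
    prefs = "; ".join(f"{k}={v}" for k, v in sorted(memory.preferences.items()))
    history = " | ".join(session.turns)
    return f"[memory: {prefs}] [context: {history}] {question}"


memory = MemoryStore()
memory.remember("tone", "formal")

first = Session()
first.add("Draft the vendor memo.")

second = Session()  # a new session starts with empty context
prompt = build_prompt(memory, second, "Revise the memo.")
print(prompt)
```

The second session knows nothing about the first conversation, but it still carries the stored preference. That carried-over state is the part that raises governance questions.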

The Real Battleground: The Persistent Memory Layer

The competitive frontier is no longer just model capability.

It is ownership and control over the persistent memory layer.

This is where:

- enterprise workflows become encoded

- operational habits are reinforced

- internal knowledge compounds

- decision patterns become embedded

In other words, this is where AI stops being a novelty and becomes infrastructure.

Some companies treat memory as an internal platform feature.

Others are experimenting with interoperability, structured export systems, and ecosystem-based persistence.

But underneath all of these approaches sits the same strategic question:

Who owns the memory?

Because whoever controls the memory controls continuity, switching costs, and long-term value creation.

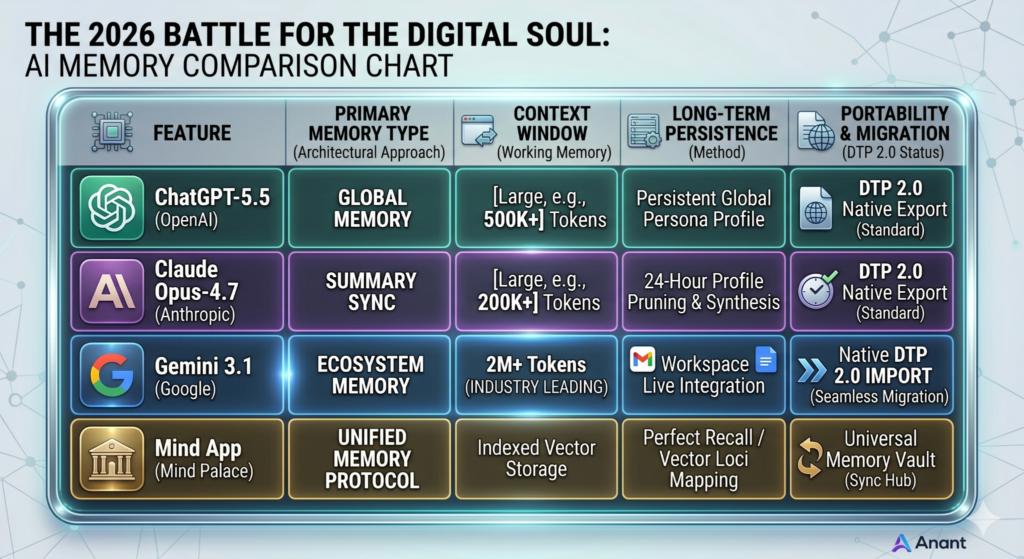

Competing Memory Architectures

The major AI ecosystems are developing fundamentally different approaches to memory and continuity.

And those differences matter because they shape:

- portability

- governance

- operational dependency

- enterprise resilience

The next phase of AI competition is not just about intelligence.

It is about how memory is accumulated and controlled.

ChatGPT: Persistent Conversational Identity

OpenAI and ChatGPT increasingly approach memory as an evolving identity layer.

The system retains preferences, recurring themes, and long-term interaction patterns to create continuity and personalization.

The advantage is frictionless persistence.

The tradeoff is that accumulated continuity becomes increasingly embedded within the platform itself.

OpenAI has spent years building toward this paradigm, using retained histories and preference layers as the early infrastructure of persistence. With the 2026 memory retrieval framework, that continuity has become explicit: the model shifts from a stateless, blank-slate processor to a stateful system that draws on historical interaction patterns to present a persistent conversational identity.

That raises an important governance question:

How portable is that identity layer once it becomes operationally valuable?

Gemini: Ecosystem Memory

Google and Gemini appear to approach memory differently.

Rather than anchoring continuity solely inside the chatbot, the model increasingly draws context from the broader Google ecosystem:

- Gmail

- Docs

- Calendar

- Drive

- Workspace integrations

The result is less of a standalone assistant and more of an ecosystem-native intelligence layer.

The advantage is integration and depth.

The risk is ecosystem dependency.

Claude: Structured Context and Controlled Continuity

Anthropic and Claude have historically emphasized large context handling and structured reasoning rather than aggressively persistent consumer memory.

The approach feels more session-centric than identity-centric.

The advantage is controlled reasoning and reduced dependency on persistent behavioral profiling.

The tradeoff is potentially less seamless continuity across long-term workflows.

Mind AI: The Renegade Memory Layer

Mind AI is experimenting with a different approach entirely.

Rather than treating memory primarily as conversational persistence, Mind AI focuses on structured symbolic reasoning through what it calls “Canonicals” — traceable knowledge structures designed to organize reasoning itself.

The result is a memory architecture that emphasizes:

- explainability

- symbolic structure

- reasoning traceability

- compartmentalized knowledge layers

Conceptually, this creates a different vision of continuity:

not just remembering what you said,

but preserving how knowledge is organized.

That distinction could become increasingly important for enterprise governance, auditability, and regulated environments.

None of these approaches is inherently right or wrong.

But they are not equivalent from a governance perspective.

Because once AI becomes infrastructure, one question becomes unavoidable:

Can you move the memory?

The Rise of Memory Portability

Projects like the Data Transfer Project and broader interoperability initiatives are pushing the industry toward more portable data environments where users can increasingly:

- export interaction histories

- transfer structured datasets

- migrate workflows

- reconstruct behavioral context across systems

There is still no universal standard for AI memory portability.

But the direction is increasingly clear.

Memory is becoming:

- extractable

- transferable

- economically valuable

And once memory becomes economically valuable, governance follows.

From Consumer Convenience to Enterprise Risk

For enterprises, this is no longer just a user experience issue.

It is a governance issue.

Where does the operational knowledge of your AI-enabled workforce actually reside?

Who controls it?

Who can export it?

And what happens if access disappears?

On April 7, 2026, the National Institute of Standards and Technology (NIST) released a concept note, tied to its evolving AI Risk Management Framework efforts, on trustworthy AI in critical infrastructure environments.

The note does not create new mandates.

But it signals where governance expectations are heading:

- lifecycle risk management

- operational resilience

- dependency mapping

- system-level accountability

The document does not explicitly discuss “memory lock-in.”

But once AI systems become continuously evolving, data-dependent operational environments, the next governance question becomes obvious:

What happens when the memory itself becomes mission critical?

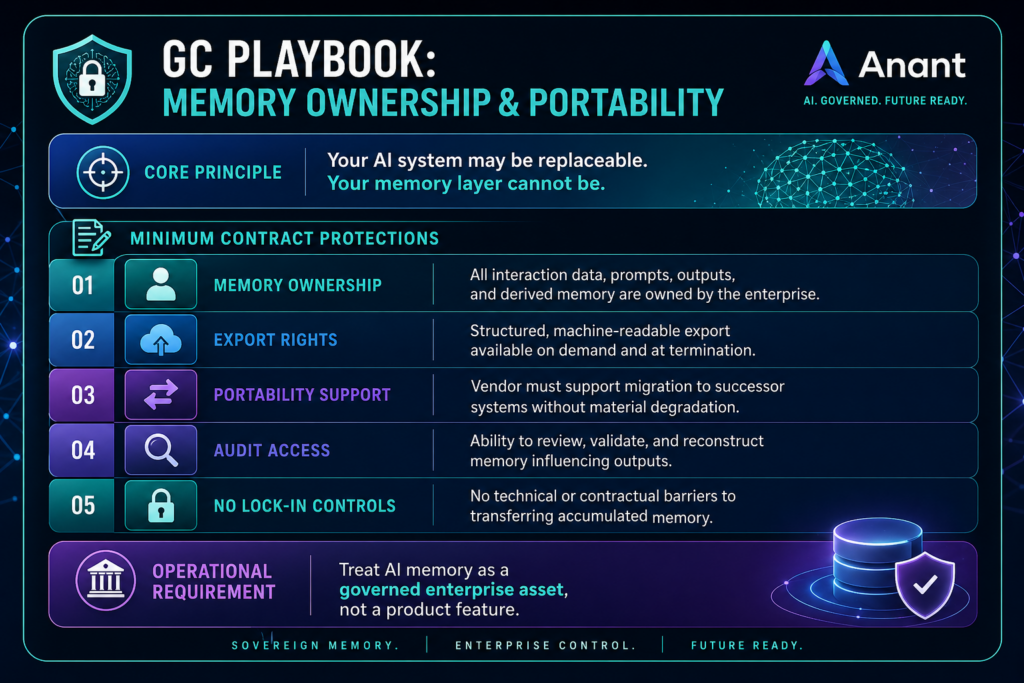

The General Counsel Playbook

For General Counsel and enterprise leadership teams, Sovereign Memory cannot remain theoretical.

It has to become operational.

As AI systems accumulate:

- prompts

- outputs

- workflow logic

- retrieval structures

- institutional knowledge

- behavioral patterns

that memory becomes operational infrastructure.

Not just application data.

That means enterprise AI governance frameworks should address five foundational protections:

1. Memory Ownership

Contracts should explicitly state that prompts, outputs, interaction histories, and derived workflow intelligence remain enterprise-owned.

2. Export Rights

Organizations should retain the ability to export AI memory and interaction data in structured, machine-readable formats.

3. Portability Support

Vendors should support migration to successor systems without material degradation of operational memory.

4. Audit Access

Organizations need visibility into retained histories, retrieval systems, and memory reconstruction mechanisms influencing outputs.

5. No Lock-In Controls

Contracts should prohibit technical or contractual barriers that prevent organizations from transferring accumulated memory into new environments.

Because while models evolve rapidly, institutional memory compounds over time.

And trapped memory creates operational dependency.
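What a "structured, machine-readable" export might look like in practice can be sketched in a few lines. This is an illustration under stated assumptions, not a vendor schema or an industry standard: the `MemoryRecord` fields, the `schema_version` key, and the sample values are all hypothetical. The point is the round trip. If an export cannot be re-imported without loss, the export right is weaker than it looks on paper.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class MemoryRecord:
    # Field names are illustrative assumptions, not a vendor schema.
    kind: str        # e.g. "preference", "workflow", "interaction"
    key: str
    value: str
    created_at: str  # ISO 8601 timestamp


def export_memory(records, schema_version="0.1"):
    """Serialize records to a JSON document a successor system could parse."""
    payload = {
        "schema_version": schema_version,
        "records": [asdict(r) for r in records],
    }
    return json.dumps(payload, indent=2, sort_keys=True)


def import_memory(document):
    """Rebuild records from an exported document."""
    payload = json.loads(document)
    return [MemoryRecord(**item) for item in payload["records"]]


records = [
    MemoryRecord("preference", "citation_style", "Bluebook", "2026-01-15T09:00:00Z"),
    MemoryRecord("workflow", "contract_review", "redline, then summarize", "2026-02-03T14:30:00Z"),
]

exported = export_memory(records)
restored = import_memory(exported)
print(restored == records)
```

A practical test for counsel reviewing an export clause: ask the vendor to demonstrate the equivalent of this round trip on real data before signing.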

The Tactical Shift for Enterprises

The strategic move in 2026 is not selecting the “best” model.

It is designing architectures where the model itself becomes replaceable.

That means:

- negotiating portability provisions into vendor agreements

- classifying AI interaction history as enterprise data

- designing workflows independent of single providers

- aligning with interoperability standards where possible

Because as memory compounds over time, switching costs climb with it.

The durable asset is not necessarily the model.

It is the memory layer sitting behind it.
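One way to picture a replaceable model is a thin interface between the enterprise-held memory and whichever provider sits behind it. The sketch below is a simplified assumption, not a reference architecture: `ModelProvider`, `VendorA`, `VendorB`, and `Assistant` are hypothetical names, and the "vendors" are stand-ins that just echo the prompt rather than calling any real API.

```python
from typing import Protocol


class ModelProvider(Protocol):
    """Anything that can turn a prompt into a completion (hypothetical)."""

    def complete(self, prompt: str) -> str: ...


class VendorA:
    def complete(self, prompt: str) -> str:
        return f"A:{prompt}"  # stand-in for a real model call


class VendorB:
    def complete(self, prompt: str) -> str:
        return f"B:{prompt}"  # stand-in for a real model call


class Assistant:
    """Owns the memory; borrows the model."""

    def __init__(self, provider: ModelProvider) -> None:
        self.provider = provider
        self.memory = []  # held by the enterprise, not the vendor

    def ask(self, question: str) -> str:
        # Prior questions are replayed as context for the current one.
        prompt = " ".join(self.memory + [question])
        answer = self.provider.complete(prompt)
        self.memory.append(question)
        return answer

    def switch_provider(self, provider: ModelProvider) -> None:
        # Memory is untouched when the model changes.
        self.provider = provider


assistant = Assistant(VendorA())
first_answer = assistant.ask("Summarize clause 4.")

assistant.switch_provider(VendorB())
second_answer = assistant.ask("Now clause 5.")
print(second_answer)
```

After the switch, the second vendor receives the accumulated history even though it never saw the first exchange. The design choice is that the memory lives on the enterprise side of the interface.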

Final Thought

For the last several years, the dominant question in AI has been:

What can the model do?

But the more important question now may be:

What does the system remember—and who controls that memory?

Because memory is no longer just a feature.

It is becoming infrastructure.

And if you cannot take that memory with you, you may not fully control your own AI future.

***

Lili Kazemi is General Counsel and AI Policy Leader at Anant Corporation, where she advises on the intersection of global law, tax, and emerging technology. She brings over 20 years of combined experience from leading roles in Big Law and Big Four firms, with a deep background in international tax, regulatory strategy, and cross-border legal frameworks. Lili is also the founder of DAOFitLife, a wellness and performance platform for high-achieving professionals navigating demanding careers.
