By Lili Kazemi | Founder, The Human Edge of AI

NIST has always mattered.

But in December 2025, it became unavoidable.

The National Institute of Standards and Technology (NIST) — the U.S. Department of Commerce agency responsible for developing widely adopted security standards — released updates that quietly changed the posture of cybersecurity governance in the AI era.

If you are building, deploying, or overseeing AI systems, this was not a routine publication cycle.

It was a structural shift.

You can read the core framework here:

👉 https://doi.org/10.6028/NIST.CSWP.29

And the December AI-focused update here:

👉 https://doi.org/10.6028/NIST.IR.8596.iprd

But the deeper story is what those documents mean together.

NIST Is Not a Regulator — But It Sets the Baseline

NIST does not fine companies. It does not bring enforcement actions.

Yet its frameworks become the operational backbone for:

- Federal procurement

- Sector-specific cybersecurity expectations

- Insurance underwriting standards

- Board-level governance benchmarks

- “Reasonable security” analysis in litigation

NIST standards become law-adjacent through adoption.

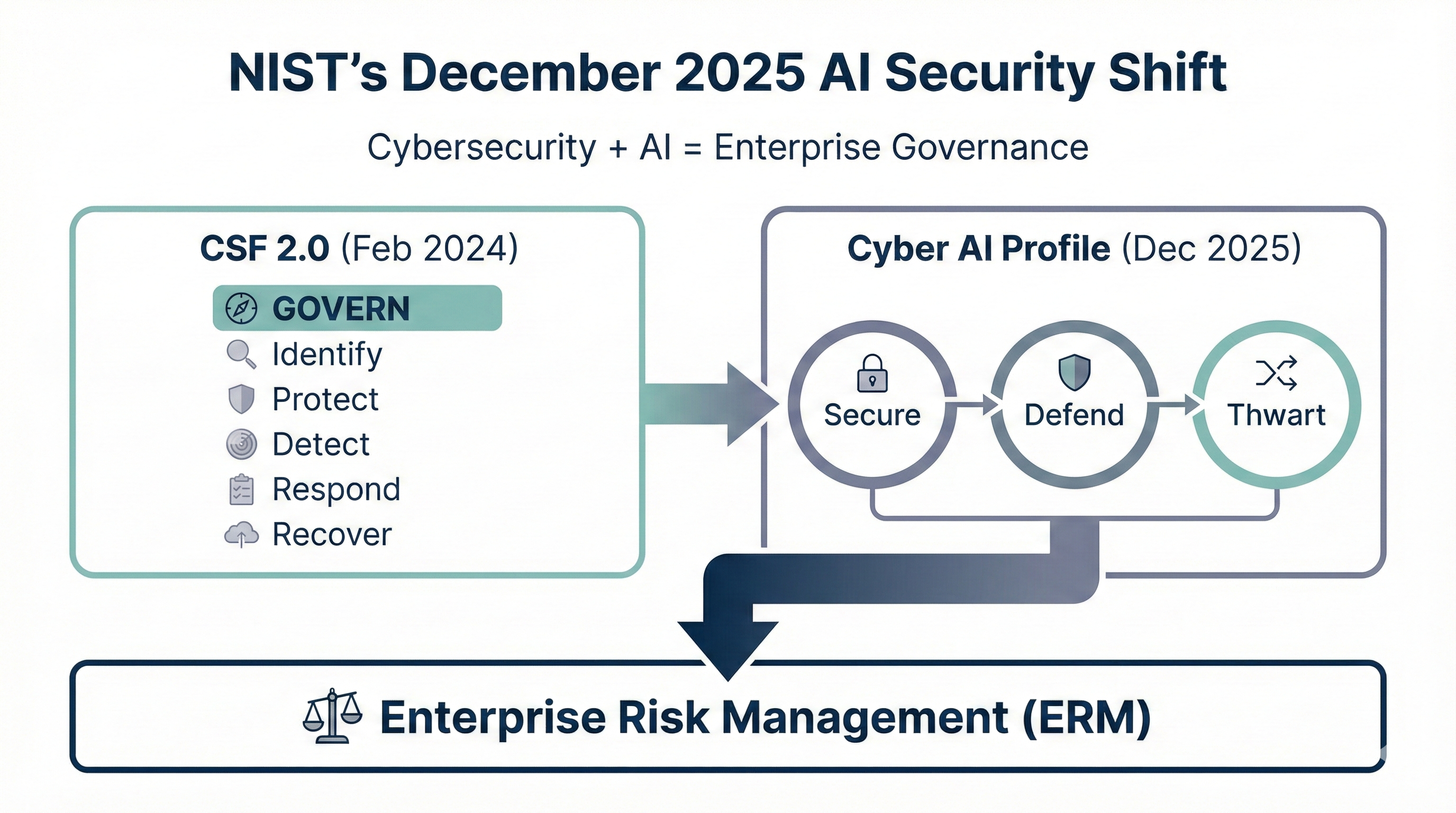

That’s why the February 2024 release of the Cybersecurity Framework 2.0 was already important.

It added a sixth function: Govern.

And it made governance the starting point.

You can review CSF 2.0 here:

👉 https://doi.org/10.6028/NIST.CSWP.29

Governance is no longer implied.

It must be documented.

Then Came December 2025

In December 2025, NIST released a preliminary draft Cybersecurity Framework Profile for Artificial Intelligence — commonly referred to as the “Cyber AI Profile.”

Read the draft here:

👉 https://doi.org/10.6028/NIST.IR.8596.iprd

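This was not a separate AI framework. It was a profile built directly on CSF 2.0, which means it applies the framework's existing functions and outcomes to AI rather than defining new ones.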

That distinction matters.

NIST is signaling that AI security is not a niche problem.

It is a cybersecurity governance problem.

The draft profile introduces three overlapping focus areas:

- Secure AI systems

- Defend with AI

- Thwart AI-enabled attacks

That last point is where many organizations are behind.

AI is not just another asset to protect.

It is a force multiplier — for defenders and attackers alike.

The ERM Integration Signal

Two days after the AI profile announcement, NIST revised its cybersecurity-to-enterprise-risk integration guidance in the IR 8286 series.

See the updated series here:

👉 https://doi.org/10.6028/NIST.IR.8286r1

This is where governance becomes real.

The IR 8286 updates align cyber risk management directly with enterprise risk management. Cyber risk is framed as an input to strategic decision-making, not as a technical cost center.

In plain English:

Cybersecurity is now a board-level risk architecture question.

Not just a security tools question.

What This Means in Practice

The December 2025 updates do not create new law.

They create new expectations.

If your organization deploys AI, the draft profile asks you to think in three dimensions:

- Are your AI system components secure?

- Are you using AI defensively in cybersecurity operations?

- Are you prepared for adversaries who use AI against you?

And all of this must map back to the Govern function of CSF 2.0.

Governance must define:

- Who owns AI security risk

- How risk is measured

- How it is reported upward

- How remediation is prioritized

- How it integrates into enterprise objectives

If you cannot answer those questions, you do not have governance maturity under CSF 2.0.
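
One way to make those five questions concrete is to treat them as required fields on each AI risk record. The Python sketch below is a minimal illustration under stated assumptions: the `AIRiskEntry` class, its field names, and the `governance_gaps` helper are hypothetical and do not come from CSF 2.0 or the draft profile. It shows only how an undocumented answer becomes a visible gap.

```python
from dataclasses import dataclass

# Hypothetical risk-register record. The field names are illustrative
# assumptions that mirror the five governance questions above; they are
# not NIST-defined terms.
@dataclass
class AIRiskEntry:
    risk: str
    focus_area: str                  # "secure", "defend", or "thwart"
    owner: str = ""                  # who owns the AI security risk
    measurement: str = ""            # how the risk is measured
    reporting_path: str = ""         # how it is reported upward
    remediation_priority: str = ""   # how remediation is prioritized
    enterprise_objective: str = ""   # which enterprise objective it ties to

GOVERNANCE_FIELDS = (
    "owner",
    "measurement",
    "reporting_path",
    "remediation_priority",
    "enterprise_objective",
)

def governance_gaps(entry: AIRiskEntry) -> list[str]:
    """Return the governance questions this entry leaves unanswered."""
    return [name for name in GOVERNANCE_FIELDS if not getattr(entry, name).strip()]

# Example data is invented for illustration.
entry = AIRiskEntry(
    risk="Prompt injection against the support chatbot",
    focus_area="secure",
    owner="CISO",
    measurement="Quarterly red-team findings",
)

print(governance_gaps(entry))
# ['reporting_path', 'remediation_priority', 'enterprise_objective']
```

An entry with an empty gap list is documented, not necessarily mature. The check only confirms that someone wrote the answers down.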

The Quiet Convergence

This shift aligns with broader regulatory currents:

- SEC cyber disclosure rules

- Global AI regulation

- Supply chain security scrutiny

- Public-sector procurement standards

NIST may not enforce. But regulators, auditors, and counterparties increasingly look to NIST as the benchmark for “reasonable.”

Which means December 2025 was not just about guidance.

It was about convergence.

Cybersecurity. AI governance. Enterprise risk.

One architecture.

The Executive Question

The right question is no longer:

“Are we secure?”

The better question is:

“Can we demonstrate governance maturity for AI-aware cybersecurity under scrutiny?”

If the answer depends on informal processes, undocumented decisions, or siloed teams, the architecture is incomplete.

NIST has clarified the structure.

Now leadership must operationalize it.


Lili Kazemi is General Counsel and AI Policy Leader at Anant Corporation, where she advises on the intersection of global law, tax, and emerging technology. She brings over 20 years of combined experience from leading roles in Big Law and Big Four firms, with a deep background in international tax, regulatory strategy, and cross-border legal frameworks. Lili is also the founder of DAOFitLife, a wellness and performance platform for high-achieving professionals navigating demanding careers.
