By Lili Kazemi | Founder, The Human Edge of AI

A governance framework for law firms, general counsel, and sophisticated buyers

Executive Summary

AI adoption in legal services is no longer experimental. It is structural.

For law firms and legal departments, AI systems influence legal work product, touch client data, generate discoverable records, and introduce dependency risk. The real exposure does not lie in how well the model drafts. It lies in:

- Dataset provenance and licensing

- Logging and retention practices

- Infrastructure dependency

- Cross-tenant segregation

- Discoverability of prompts and outputs

- Contractual allocation of IP and privacy risk

This article provides a structured, question-driven framework you can use in vendor conversations — not to sound technical, but to avoid behaving like an AI tourist.

The Tourist Problem

An AI tourist is impressed by features.

An AI insider evaluates control.

A tourist asks:

- “What model do you use?”

- “Is it secure?”

- “How accurate is it?”

An insider asks:

- “Who owns the weights?”

- “Where does our data live?”

- “What gets logged?”

- “What survives if there’s a subpoena?”

The distinction is not about vocabulary. It is about understanding where risk accumulates.

Before You Even Begin: Are You Protected?

Before engaging deeply with any vendor, ask yourself:

- Is there an NDA in place?

- Are you prepared to avoid disclosing client-specific data?

- Are internal stakeholders aligned on what can and cannot be shared?

Before contractual protection exists, do not:

- Upload real documents

- Describe active matters

- Share proprietary prompts

- Expose internal compliance logic

Early calls are exploratory — not confidential strategy sessions.

The Insider’s Framework: What to Ask and Why It Matters

Below is the question-driven structure that separates curiosity from governance.

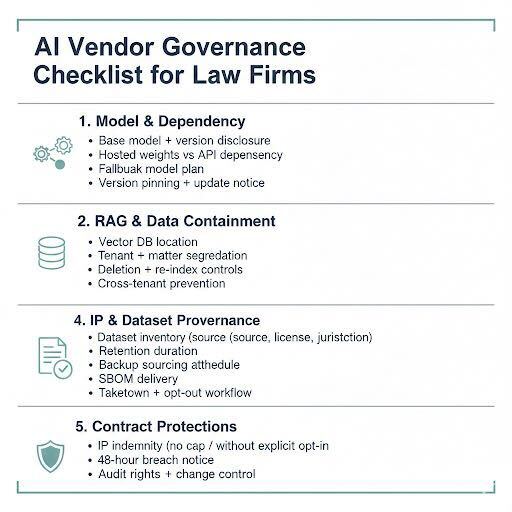

1. Model Lineage & Dependency Architecture

Ask:

- What is the base model and version?

- Have you fine-tuned it? If so, on what datasets?

- Do you host the model weights, or rely on a third-party API?

- If you rely on an upstream provider, what happens if access changes?

- Can we pin to a specific model version for reproducibility?

- How are material model updates communicated?

Why it matters:

Dependency risk is often invisible until a provider changes terms, pricing, or licensing. Model updates without version control create litigation and reproducibility exposure.
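To make the version-pinning question concrete, a firm could record which model and version produced each piece of AI-assisted work product. The sketch below is illustrative only: `GenerationRecord`, its field names, and the example model name and version string are assumptions, not any vendor's actual API.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationRecord:
    """Hypothetical audit record tying an output to a pinned model version."""
    model_name: str      # assumed identifier supplied by the vendor
    model_version: str   # the pinned version string agreed with the vendor
    prompt_sha256: str   # hash only, so the record itself holds no client text
    output_sha256: str


def sha256_hex(text: str) -> str:
    """Hex-encoded SHA-256 digest of a string."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_record(model_name: str, model_version: str, prompt: str, output: str) -> GenerationRecord:
    """Build a record from the raw prompt and output without storing either."""
    return GenerationRecord(model_name, model_version, sha256_hex(prompt), sha256_hex(output))


def version_drifted(record: GenerationRecord, pinned_version: str) -> bool:
    """True when the output came from a version other than the pinned one."""
    return record.model_version != pinned_version


record = make_record("example-model", "2024-06-01", "Summarize clause 4.", "Clause 4 covers notice periods.")
print(version_drifted(record, "2024-06-01"))  # False
```

A record like this only helps if the vendor actually exposes a stable version identifier, which is what the pinning question is meant to establish.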

2. RAG & Knowledge Containment

Ask:

- Do you use retrieval-augmented generation?

- Where is the vector database hosted?

- Are embeddings segregated by tenant and by matter?

- Can indexed data be deleted fully and verifiably?

- Is customer data ever pooled or reused?

- What controls prevent cross-tenant leakage?

Why it matters:

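For legal practice, containment is non-negotiable. Retrieval architecture determines privilege integrity: if indexed material from one client or matter can surface in another's results, confidentiality and privilege are put at risk.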
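The segregation question can be pictured as a scoping step that runs before any retrieval result reaches the model. The following is a minimal sketch, assuming each indexed item carries a tenant label and a matter label. `IndexedChunk` and `scope_to_matter` are hypothetical names, and a real vector database would typically enforce this at the storage layer rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IndexedChunk:
    """Hypothetical stored item: text plus the ownership labels it was indexed under."""
    tenant_id: str
    matter_id: str
    text: str


def scope_to_matter(chunks: list[IndexedChunk], tenant_id: str, matter_id: str) -> list[IndexedChunk]:
    """Keep only chunks owned by the requesting tenant AND the requesting matter."""
    return [c for c in chunks if c.tenant_id == tenant_id and c.matter_id == matter_id]


index = [
    IndexedChunk("firm-a", "matter-1", "Draft settlement terms"),
    IndexedChunk("firm-a", "matter-2", "Merger diligence memo"),
    IndexedChunk("firm-b", "matter-1", "Another firm's filing"),
]

visible = scope_to_matter(index, "firm-a", "matter-1")
print([c.text for c in visible])  # ['Draft settlement terms']
```

The useful vendor question is where the equivalent of this filter lives and whether it can be bypassed, not whether it exists in a demo.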

3. Data Handling, Logging & Retention

Ask:

- What gets logged by default (prompts, outputs, metadata)?

- How long are logs retained?

- Are logs used for model improvement or safety evaluation?

- Can retention policies be contractually customized?

- How are backups purged?

- What deletion SLAs apply?

Why it matters:

Courts are beginning to scrutinize AI-generated records. If logs exist, assume discoverability.
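A retention policy ultimately reduces to a rule about which records should no longer exist on a given date. The sketch below assumes a 30-day window and a per-entry legal hold flag; both are illustrative assumptions, as are the `LogEntry` and `due_for_deletion` names. It shows the kind of check a firm might ask a vendor to demonstrate.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class LogEntry:
    """Hypothetical log row; real vendor logs will carry different fields."""
    entry_id: str
    logged_at: datetime
    legal_hold: bool = False


def due_for_deletion(entries: list[LogEntry], retention_days: int, now: datetime) -> list[str]:
    """Return IDs of entries older than the retention window and not on legal hold."""
    cutoff = now - timedelta(days=retention_days)
    return [e.entry_id for e in entries if e.logged_at < cutoff and not e.legal_hold]


now = datetime(2025, 3, 1, tzinfo=timezone.utc)
entries = [
    LogEntry("a", datetime(2025, 1, 1, tzinfo=timezone.utc)),                    # 59 days old
    LogEntry("b", datetime(2025, 2, 20, tzinfo=timezone.utc)),                   # 9 days old
    LogEntry("c", datetime(2024, 12, 1, tzinfo=timezone.utc), legal_hold=True),  # old, but held
]
print(due_for_deletion(entries, retention_days=30, now=now))  # ['a']
```

Backups are not covered by this check, which is why the backup-purge question deserves its own answer.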

4. IP & Dataset Provenance

Ask:

- What datasets were used to train or fine-tune the model?

- Are those datasets licensed? Can you provide documentation?

- Do you maintain a labeled dataset inventory (source, jurisdiction, license type)?

- Were any datasets scraped? If so, under what authority?

- How do you handle takedown requests?

- Is there an opt-out or recall mechanism for rights holders?

- What happens if a dataset becomes legally challenged?

Why it matters:

IP litigation risk is migrating from content output to training lineage.

5. Security & Access Architecture

Ask:

- Who inside your organization can access customer data?

- Is access role-based and auditable?

- Is data encrypted at rest and in transit?

- Are tenants logically or physically segregated?

- What is your breach notification timeline?

- Do you support private or air-gapped deployments?

Why it matters:

Security posture determines both regulatory exposure and client trust.

6. Litigation Readiness & Discoverability

Ask:

- Can prompts and outputs be exported for legal hold?

- Who controls preservation obligations?

- Can logging be adjusted in anticipation of litigation?

- Are reproducibility logs available?

- If compelled by subpoena, what records exist?

Why it matters:

AI systems generate records. Those records will be examined.

The Vendor Contract Checklist

Due diligence is incomplete without enforceable protections.

Below is a practical, drop-in checklist suitable for RFPs or AI procurement frameworks.

IP & Dataset Provenance Protections

Require:

- Full model lineage disclosure (base, fine-tunes, adapters)

- Ownership or licensed rights to weights

- Labeled dataset inventory including:

  - Source

  - Date range

  - Jurisdiction

  - License type

  - Acquisition method

  - Removal rights

- Written attestation that no data was obtained in breach of contract, terms of service, or law

- SBOM (software bill of materials)

- Documented opt-out and recall processes

- Takedown workflow documentation

- Confirmation that safety filters process customer content only to the extent necessary

- Release notes for model updates made in response to rights issues
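The labeled dataset inventory in this checklist can be requested in a structured form so that gaps are easy to spot. The sketch below is one possible shape, not a standard schema: the `DatasetEntry` field names simply mirror the checklist items, and the sample values are invented for illustration.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class DatasetEntry:
    """One inventory row; fields mirror the checklist, values are vendor-supplied."""
    source: Optional[str] = None
    date_range: Optional[str] = None
    jurisdiction: Optional[str] = None
    license_type: Optional[str] = None
    acquisition_method: Optional[str] = None
    removal_rights: Optional[str] = None


def missing_fields(entry: DatasetEntry) -> list[str]:
    """List the checklist fields the vendor left blank."""
    return [f.name for f in fields(entry) if not getattr(entry, f.name)]


entry = DatasetEntry(source="Example licensed corpus", license_type="Commercial license")
print(missing_fields(entry))
# ['date_range', 'jurisdiction', 'acquisition_method', 'removal_rights']
```

An inventory with many blank fields is itself a finding worth raising in negotiation.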

Core Contract Protections

Representations & Warranties

- Lawful sourcing of training data

- No hidden training on customer data

- No knowing infringement, defamation, or disclosure of confidential information

Indemnities

- IP infringement coverage including defense costs

- Privacy and data claim coverage

- No cap (or meaningful super-cap) on IP indemnity

- Vendor as first-line responder

Customer Data Protections

- Customer retains ownership of all data

- No training without explicit, revocable opt-in

- Strict segregation of environments

- Breach notice within 48 hours

- Deletion within 30 days upon request
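The two time limits above are easy to track once the triggering event is defined. The sketch below assumes the breach clock starts at discovery and the deletion clock starts at the request. Those triggers are assumptions that the contract itself should settle, and the function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

BREACH_NOTICE_WINDOW = timedelta(hours=48)  # from the checklist
DELETION_WINDOW = timedelta(days=30)        # from the checklist


def breach_notice_deadline(discovered_at: datetime) -> datetime:
    """Latest time for notice, assuming the clock starts at discovery."""
    return discovered_at + BREACH_NOTICE_WINDOW


def deletion_deadline(requested_at: datetime) -> datetime:
    """Latest time for deletion, assuming the clock starts at the request."""
    return requested_at + DELETION_WINDOW


def met(deadline: datetime, completed_at: datetime) -> bool:
    """True when the obligation was completed on or before the deadline."""
    return completed_at <= deadline


discovered = datetime(2025, 5, 1, 9, 0, tzinfo=timezone.utc)
deadline = breach_notice_deadline(discovered)
print(deadline.isoformat())  # 2025-05-03T09:00:00+00:00
print(met(deadline, datetime(2025, 5, 4, 9, 0, tzinfo=timezone.utc)))  # False
```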

Governance Controls

- Audit rights

- Delivery of model cards and SBOM

- Notice of material model updates

- SLA commitments with fallback models

- Export rights for prompts and outputs

- Step-in rights if critical licenses are lost

AI systems must be contracted as infrastructure, not as productivity tools.

Infrastructure Awareness

Some firms — particularly those handling sensitive regulatory or governmental matters — are exploring private AI infrastructure.

Infrastructure providers such as SambaNova offer:

- On-prem or controlled AI environments

- Reduced hyperscaler dependency

- Greater data containment

Not every firm requires this. But understanding infrastructure options strengthens negotiating leverage.

Closing Perspective

The difference between a tourist and an insider is simple:

A tourist evaluates capability.

An insider evaluates control.

AI adoption is inevitable. Governance is optional — and therefore differentiating.

Ask the harder questions.

Control the contractual layer.

Understand what persists.

That is how legal institutions adopt AI without inheriting avoidable risk.